Many of us have been in a situation where someone asks, “How do you translate … from English into …?” (fill in a phrase and your favorite language). A literal translation often does the trick: “Mark is smoking,” for example, might become “Marco sta fumando” in Italian. Most of the time, however, we have to ask for the context, because a literal translation is not immediately obvious. For instance, I can think of at least three ways to render “John got it” in Italian (just check the entry for “get” in a dictionary).

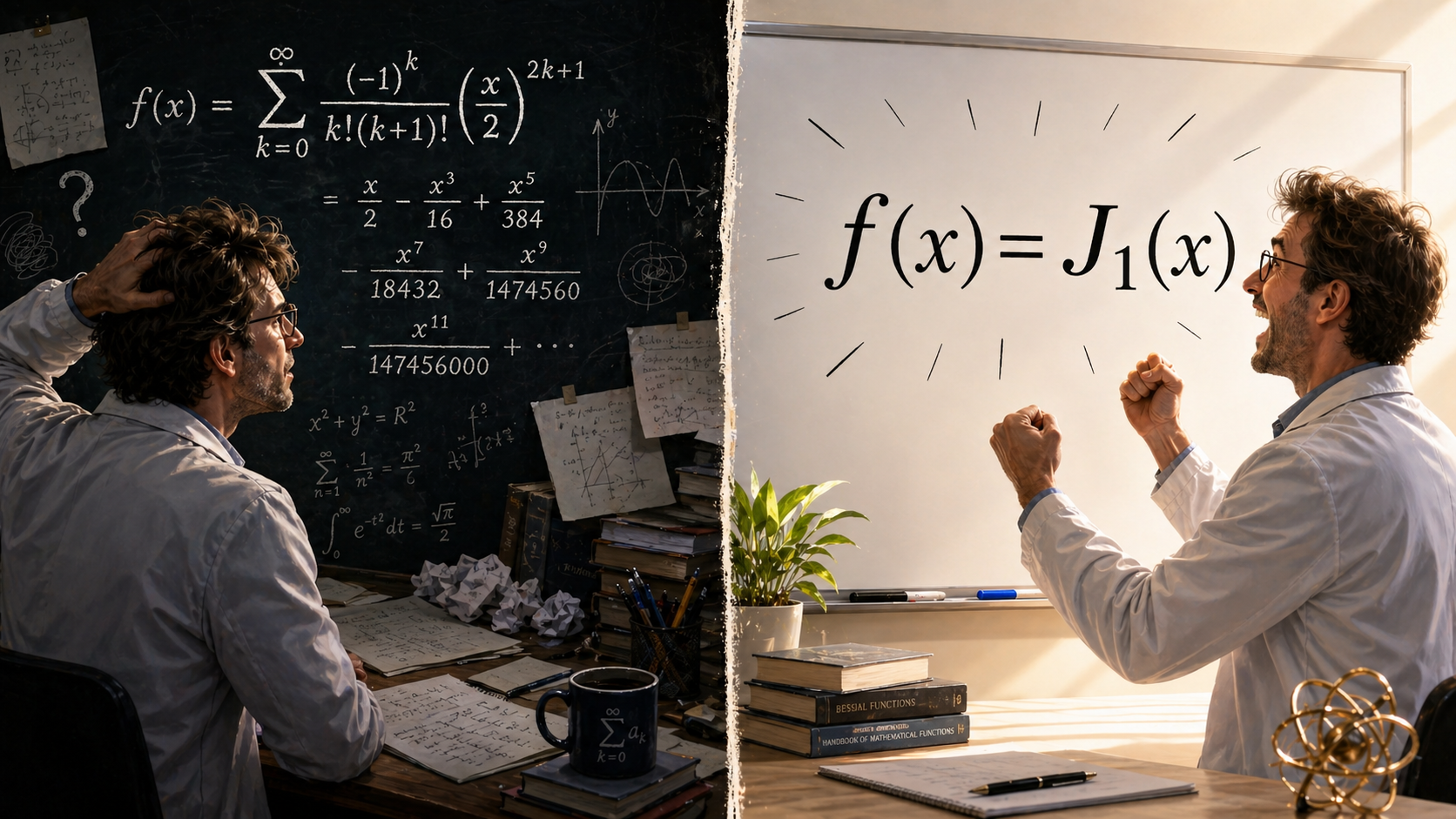

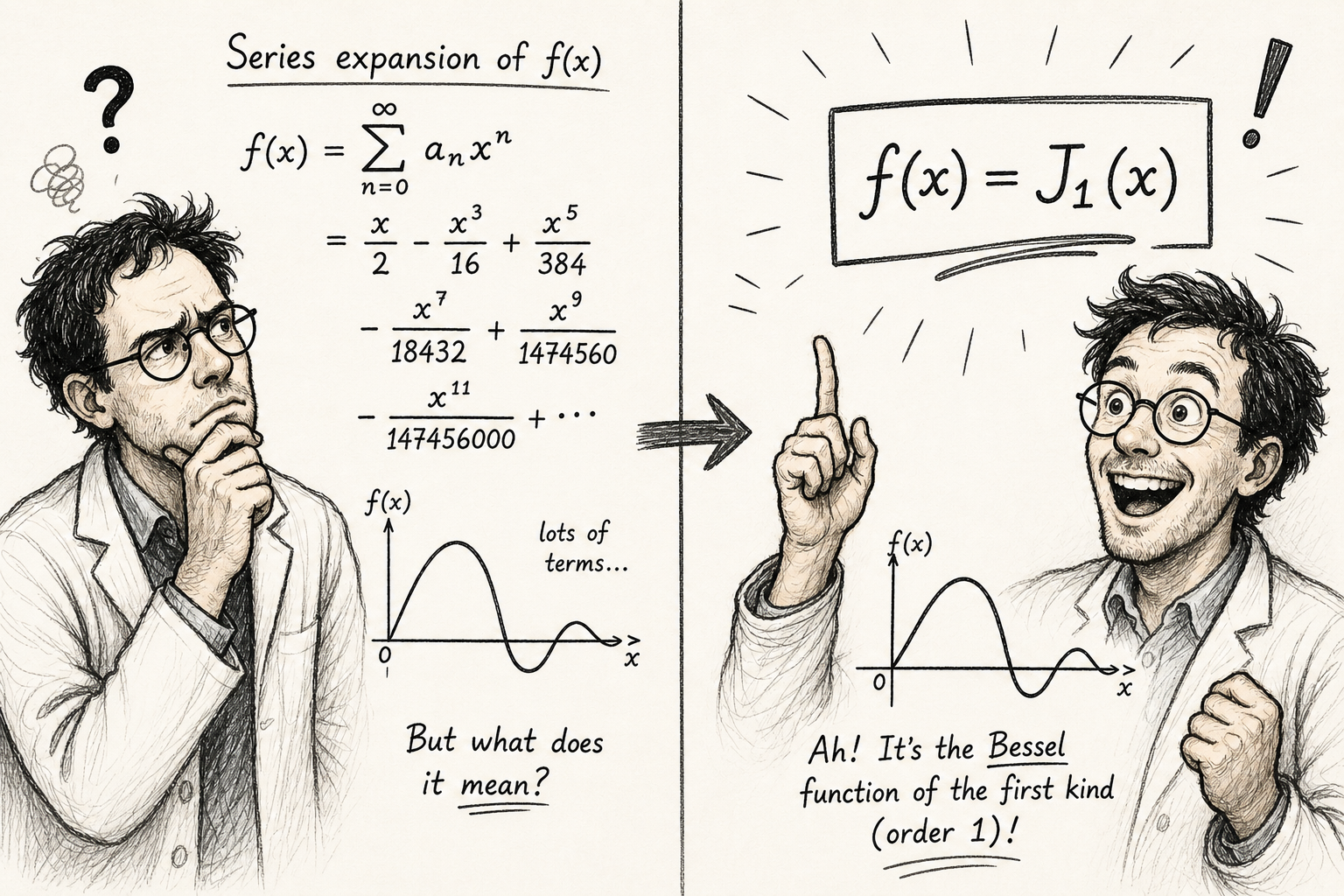

Likewise, mathematics is a language of physics, and sometimes we can translate it directly. An equation like surely rings a bell: a linear constitutive relation, such as Fick’s law or Ohm’s law. What about the expression Looks concise, but I frankly have no idea what this formula means—even if it happens to describe some data… Surely it is not obvious only to me 🙂↕️! Or not 🤔?

Physics is not just a loose bunch of formulas: it is the Big Picture of the material world in which all laws fit together—the context that makes a given equation interpretable (or not) without contradicting the rest. As theoreticians, we find concise expressions elegant. They resonate with Occam’s razor in the ideal realm of aesthetics and logic. But we can also deal with complex representations of physical laws, e.g. series expansions, as long as they remain necessary and interpretable.

In machine learning, however, model interpretability is indeed a real problem—the kind of problem the authors of SymTorch address. When we train a neural network, it may discover some laws, but it also encodes them in a mathematical representation, which appears to us about as transparent as binary machine code does to a Python programmer (regardless of what the program actually does). But that is an issue of mathematical representation—a language problem, in a sense—and a different story altogether.
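A toy sketch may make the point concrete. It is hand-built, not trained, and has nothing to do with SymTorch's actual API; the coefficient `D`, the scales `a` and `b`, and the function names are all my own assumptions. A two-unit ReLU network can encode the linear law y = -D·x exactly, yet infinitely many weight sets do the same job, and none of them looks like the law.

```python
# Hand-built toy, not a trained model and not SymTorch output.
D = 2.0  # hypothetical coefficient of the linear law y = -D * x


def relu(z: float) -> float:
    return max(0.0, z)


def tiny_net(x: float, a: float, b: float) -> float:
    """Two-unit ReLU network; a and b are arbitrary positive scales."""
    hidden = (relu(a * x), relu(-b * x))  # first layer: weights a and -b
    w_out = (-D / a, D / b)               # second layer undoes the scales
    return w_out[0] * hidden[0] + w_out[1] * hidden[1]


def law(x: float) -> float:
    """The same relation written the way a physicist would write it."""
    return -D * x


if __name__ == "__main__":
    for a, b in [(1.0, 1.0), (0.37, 12.5), (40.0, 0.02)]:
        weights = [a, -b, -D / a, D / b]
        worst = max(abs(tiny_net(x, a, b) - law(x))
                    for x in (-3.0, -0.5, 0.0, 1.0, 2.5))
        print("weights:", weights, "max deviation from the law:", worst)
```

All three weight sets reproduce the law up to rounding, but only `law` can be read at a glance. A real network adds many more units and layers on top of this ambiguity.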

Theoretical work often follows many paths to a dead end before finding one that “clicks.” Tools like SymTorch can of course unlock much of the potential hidden in data-driven modeling by suggesting plausible solutions. However, these tools do not necessarily make the discovered models physically interpretable right away.

The quote from Tan et al. (2026)[1] that triggered this post strikes me as an unfortunate rendering of the ideas of Kutz and Brunton (2022)[2], whom the authors of SymTorch cite. Kutz and Brunton, as I read their paper, never reduce physical interpretability to such a simple definition, one that in my opinion confuses the needs of physics with the problems of applied machine learning.

After all, if the goal is merely to make a formula concise (and to make Stephen Wolfram work harder), one can always define a new special function 😁, as was done with the Bessel and Airy functions in celestial mechanics and optics.
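To see how much a name can hide, here is a minimal sketch using only Python's standard library. It evaluates the Bessel function J0 in two standard ways: from its power series and from its integral representation. The function names `j0_series` and `j0_integral`, the number of terms, and the grid size are my own illustrative choices.

```python
import math


def j0_series(x: float, terms: int = 30) -> float:
    """Partial sum of J0(x) = sum_k (-1)^k (x/2)^(2k) / (k!)^2."""
    return sum((-1) ** k * (x / 2) ** (2 * k) / math.factorial(k) ** 2
               for k in range(terms))


def j0_integral(x: float, n: int = 2000) -> float:
    """Midpoint rule for J0(x) = (1/pi) * integral_0^pi cos(x sin(t)) dt."""
    h = math.pi / n
    total = sum(math.cos(x * math.sin((i + 0.5) * h)) for i in range(n))
    return total * h / math.pi


if __name__ == "__main__":
    for x in (0.0, 1.0, 2.0, 5.0):
        print(x, j0_series(x), j0_integral(x))
```

Writing “J0(x)” is certainly concise, but the symbol only stands in for the series or the integral. It earns its interpretability from the physics built around it, not from its length.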

Illustrations are generated with ChatGPT and Gemini; prompt and selection by RB.

[1] Elizabeth S.Z. Tan, Adil Soubki, Miles Cranmer. SymTorch: A Framework for Symbolic Distillation of Deep Neural Networks. arXiv:2602.21307 (2026).

[2] J. Nathan Kutz, Steven L. Brunton. Parsimony as the ultimate regularizer for physics-informed machine learning. Nonlinear Dyn. 107, p. 1801 (2022).