Perhaps everyone knows that there are often (if not always) many paths to the same solution of a given mathematical problem. However, as one of my mentors (Stanislav K. Filatov) taught me: having many unrelated physical theories for the same thing is almost the same as having none 🙂. He also once remarked, in a lecture on the differences between Bragg’s and Laue’s derivations of X-ray diffraction in crystals, that sometimes even a “wrong” mathematical model leads to the right answer 😅. Indeed, the logical law of implication (a false premise can still imply a true conclusion) is often a blessing for theoreticians 😁!

Of course, the choice between models is obvious in some situations (for many possible reasons), yet quite often they compete, especially now, with the “advent” of machine learning. Should we simply search for the best neural-network architecture and train it to solve all our problems 🙂? And if so, why do we still bother to interpret what neural networks actually learn?

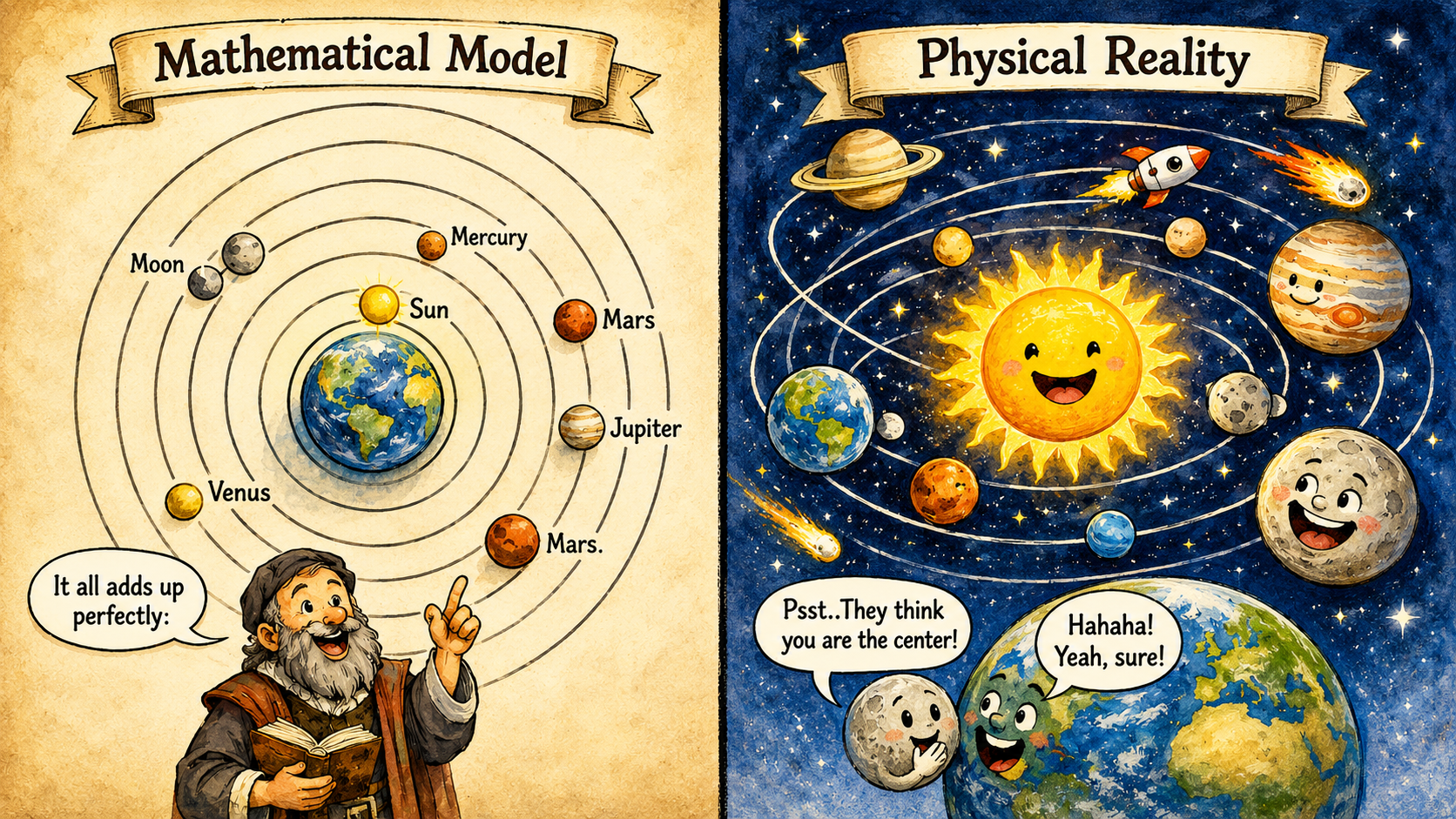

Just imagine, perhaps in a moment of madness 😅, that you trained a neural network on astronomical data and it learned the geocentric model of planetary motion (shown on the cover). For predicting what happens in the sky, this model would most likely suffice: it can be mathematically precise down to the finest detail. Sailors, after all, managed to navigate the seas long before anyone realized that most celestial bodies do not move around Earth.

Today, we can even accurately describe how the motion of planets appears to an observer on Earth. Mathematics allows us to switch easily between points of view, sometimes exposing how different formulas are related. Sitting on a train, for example, we can describe the trees as “flying” past us in a co-moving reference frame. Yet an accurate formula alone does not always reveal what is cause and what is effect.
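To make the switch between reference frames concrete, here is a minimal Python sketch under strongly simplifying assumptions: circular, coplanar orbits with rounded textbook radii and periods for Earth and Mars, and hypothetical function names chosen only for illustration. Moving from the Sun-centred frame to the Earth-centred one is nothing more than subtracting Earth's position, yet in the Earth-centred frame Mars periodically appears to move backwards across the sky (retrograde motion), the very feature the geocentric model had to reproduce with extra epicycles.

```python
import math

# Simplifying assumption: circular, coplanar orbits around the Sun.
# (radius in AU, period in years), rounded textbook values.
EARTH = (1.0, 1.0)
MARS = (1.524, 1.881)


def heliocentric(radius, period, t):
    """Position (x, y) in the Sun-centred frame at time t (years)."""
    angle = 2 * math.pi * t / period
    return radius * math.cos(angle), radius * math.sin(angle)


def geocentric(planet, t):
    """The same planet seen from Earth: subtract Earth's position."""
    px, py = heliocentric(*planet, t)
    ex, ey = heliocentric(*EARTH, t)
    return px - ex, py - ey


def apparent_longitude(planet, t):
    """Direction (radians) in which an observer on Earth sees the planet."""
    x, y = geocentric(planet, t)
    return math.atan2(y, x)


def retrograde_times(planet, t_end=3.0, steps=3000):
    """Sample times at which the apparent longitude decreases (retrograde)."""
    times = []
    dt = t_end / steps
    for i in range(steps):
        t0, t1 = i * dt, (i + 1) * dt
        delta = apparent_longitude(planet, t1) - apparent_longitude(planet, t0)
        # Wrap the difference into [-pi, pi) so the jump across +/-pi is ignored.
        delta = (delta + math.pi) % (2 * math.pi) - math.pi
        if delta < 0:
            times.append(t0)
    return times
```

Because the sketch starts Mars at opposition (Sun, Earth and Mars aligned at `t = 0`), the list returned by `retrograde_times(MARS)` begins with `0.0`. Both frames yield identical predictions; the heliocentric one simply makes the backward loops a by-product of subtraction rather than something to be modelled explicitly.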

Likewise, the same formula can be approximated by many different series expansions. Neural networks typically swim in such a soup of redundant mathematical representations, from which they need only pick one. Sometimes, depending on how we train them, they pick a different one each time.
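As a toy illustration of this redundancy (it says nothing about how any real network represents functions), the Python sketch below writes sin(x) as two different truncated Taylor series: one expanded around 0 in odd powers of x, the other expanded around π/2 in even powers of (x - π/2). The function names and the choice of eight terms are arbitrary assumptions made for the example.

```python
import math


def taylor_sin_at_0(x, terms=8):
    """sin(x) = sum_k (-1)^k x^(2k+1) / (2k+1)!, expanded around 0."""
    return sum((-1) ** k * x ** (2 * k + 1) / math.factorial(2 * k + 1)
               for k in range(terms))


def taylor_sin_at_half_pi(x, terms=8):
    """sin(x) = cos(x - pi/2) = sum_k (-1)^k (x - pi/2)^(2k) / (2k)!, around pi/2."""
    u = x - math.pi / 2
    return sum((-1) ** k * u ** (2 * k) / math.factorial(2 * k)
               for k in range(terms))


def max_error(approx, a=0.0, b=math.pi, n=200):
    """Largest deviation from math.sin over n + 1 evenly spaced points in [a, b]."""
    xs = [a + (b - a) * i / n for i in range(n + 1)]
    return max(abs(approx(x) - math.sin(x)) for x in xs)
```

On [0, π] both truncations agree with `math.sin` to better than 10⁻⁵, even though their terms look nothing alike. Neither is more “correct” than the other; which one you end up with depends on where you chose to expand.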

Returning to our “mad” example, suppose this time our network settled on the geocentric model. Suppose, moreover, that we could read “neural-networkian” and understand exactly how the network predicts the positions of the planets in that reference frame. Would we thereby unveil the cause of their motion: the law of gravity discovered by Newton?

The cover image was generated with ChatGPT; prompt and selection by RB.